Storage component granularity in Warehousing

I was involved in an interesting debate this morning with another technical architect on the warehouse and members of the DBA Team, who manage all the databases including the warehouse. The debate centred on the fact that we have a large number of tablespaces (and therefore datafiles) in our warehouse architecture, which is not something the DBA Team seemed very comfortable with.

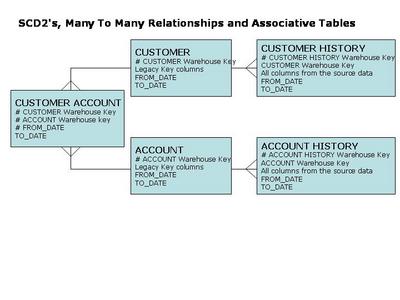

Our warehouse architecture has followed various design principles in its lifetime – some of which relate to the use of partitioning, read only tablespaces and the mapping of tables / partitions to tablespaces to datafiles to volume groups at the SAN level…

- Tables with a volume greater than (or predicted to be greater than) 1GB are partitioned. We didn’t feel there was much point in focusing on the small (relatively speaking) stuff, so our cut-off is 1GB. Any tables less than 1GB in volume are allocated to either a generic SMALL or MEDIUM tablespace.

- Date range partitioning is used on tables greater than 1GB in volume wherever possible. We’ve used a granularity of 1 month per partition everywhere, as it matches our current and likely future analytical and processing requirements. It also means our scalability is good, since most of the scheduled processing deals with a month’s worth of data at a time.

- For partitioned tables, each partition is allocated its own unique smallfile tablespace. No tablespace has more than 1 partition.

  We create each partitioned table that uses monthly ranges with initially just 2 partitions: one for the current month and one for the upcoming month. As time progresses and the months roll by, we move older read/write partitions to read only. For tables where we load historical data, all the historical partitions are created as well, and once the initial data load is completed the tablespaces holding the historical partitions are made read only.

- Each tablespace has only 1 datafile. Only 1 datafile is required since the partitioning granularity is enough to ensure that the volume of data is less than the smallfile datafile limit for all the tables in our environment. (I think our maximum datafile size is approximately 3.5GB.)

- Each datafile is allocated to a single Volume which is mapped onto a Volume Group on the SAN.

- RMAN is used for managing all backup/recovery.
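The one partition, one tablespace, one datafile principle above can be sketched as a small script that generates the DDL for a single monthly partition. This is only an illustration: the naming convention, the `/u01/oradata/dwh` directory, the sizing clauses and the `SALES` table are all assumptions made for the example, not the conventions actually used in the warehouse.

```python
from datetime import date


def next_month(d):
    """Return the first day of the month following d."""
    if d.month == 12:
        return date(d.year + 1, 1, 1)
    return date(d.year, d.month + 1, 1)


def partition_ddl(table, month, data_dir="/u01/oradata/dwh"):
    """Build the DDL for one monthly partition with its own tablespace and datafile.

    Names, path and sizes are hypothetical; adjust to local standards.
    """
    suffix = month.strftime("%Y%m")            # e.g. 200603
    tablespace = f"{table}_{suffix}"           # one tablespace per partition
    datafile = f"{data_dir}/{tablespace.lower()}_01.dbf"  # one datafile per tablespace
    upper_bound = next_month(month).isoformat()
    return [
        f"CREATE TABLESPACE {tablespace} DATAFILE '{datafile}' "
        f"SIZE 100M AUTOEXTEND ON NEXT 100M MAXSIZE 4G",
        f"ALTER TABLE {table} ADD PARTITION P{suffix} "
        f"VALUES LESS THAN (DATE '{upper_bound}') TABLESPACE {tablespace}",
    ]


def read_only_ddl(table, month):
    """Build the statement that freezes an older partition's tablespace."""
    return f"ALTER TABLESPACE {table}_{month:%Y%m} READ ONLY"


if __name__ == "__main__":
    for statement in partition_ddl("SALES", date(2006, 3, 1)):
        print(statement + ";")
    print(read_only_ddl("SALES", date(2006, 1, 1)) + ";")
```

Generating the statements from a table name and a month keeps the naming predictable, which matters once the file count runs into the thousands.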

Now, the contentious issue is that these design principles leave us with a rather large number of datafiles, currently around the 6000 mark. This is not something the DBA Team are used to, and it doesn’t sit well with them, since managing all these tablespaces/datafiles is onerous without some form of manageability infrastructure.

The question raised by the DBA Team was how we had arrived at the design principle of allocating one tablespace (with one datafile) per partition.

The other warehouse technical architect and I took the view that this approach creates very granular storage components, which is advantageous in the following ways:

Availability

If we encountered a loss/corruption of data in one partition we’d be able to offline that tablespace and recover the single datafile forming that tablespace (and therefore partition) from RMAN – thereby limiting the scope of the problem/outage to that specific partition.

Whilst the single datafile is being recovered only the single tablespace is offline and therefore only a single partition is unavailable for query.

Recovery

In recovering a single partition of a table the volume of data to be processed would be restricted to one datafile which would lead to the shortest possible recovery time.

Post Recovery Processing

After a recovery operation has successfully completed, the standard ETL processes would need to run to repeat the work which has been lost due to NOLOGGING transactions. If the scope of the affected data loss/recovery is limited to the granularity of a single datafile (and therefore a single tablespace and partition) then the post recovery processing would be minimised by having only to redo the work to rebuild that single partition.

IO Control

Using a SAME (Stripe And Mirror Everything) approach everywhere is ideal. In practice, however (and your mileage may vary here, obviously), you don’t tend to stripe and mirror everything as one unit of storage. You don’t tend to keep adding more and more disks and having just one big stripe across every disk in the array. That’s not to say you can’t do it, but there are limits to the manageability if you approach things that way.

We have a number of Volume Groups covering all of our 8TB of storage, each of which contains five RAID 5 sets. We round robin the tablespaces across all the Volume Groups in order of Data Object then Partition, but that can lead to some Volume Groups being hotter than others, or holding more files than others if the number of files isn’t divisible by the number of Volume Groups. In such cases we have the opportunity to move files from one Volume to another so that they sit on a different Volume Group. In our case that can be done at the granularity of a single partition, because we’ve used this 1:1:1 mapping for Partition:Tablespace:Datafile.
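A minimal sketch of that round-robin placement, and of the imbalance it produces when the file count isn’t divisible by the number of Volume Groups, might look like the following. The table names, partition names and the four `VG` labels are hypothetical, and the sketch only counts files; it says nothing about which groups are actually hot.

```python
from collections import Counter
from itertools import cycle


def round_robin(partitions, volume_groups):
    """Assign each (table, partition) datafile to a volume group in turn.

    Files are ordered by data object, then by partition, before placement.
    """
    ordered = sorted(partitions)
    return dict(zip(ordered, cycle(volume_groups)))


def files_per_group(placement):
    """Count how many datafiles ended up on each volume group."""
    return Counter(placement.values())


def move_file(placement, partition, target_group):
    """Return a new placement with one partition's datafile relocated."""
    updated = dict(placement)
    updated[partition] = target_group
    return updated


if __name__ == "__main__":
    tables = ["ORDERS", "SALES"]                      # hypothetical tables
    months = ["P200601", "P200602", "P200603", "P200604", "P200605"]
    partitions = [(t, m) for t in tables for m in months]
    groups = ["VG1", "VG2", "VG3", "VG4"]             # hypothetical volume groups

    placement = round_robin(partitions, groups)
    print(files_per_group(placement))   # 10 files over 4 groups cannot be even

    # Because each partition has its own datafile, one partition can be
    # relocated without touching any other.
    placement = move_file(placement, ("SALES", "P200605"), "VG4")
    print(files_per_group(placement))
```

The point of the 1:1:1 mapping is visible in `move_file`: relocating a single partition changes exactly one entry in the placement.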

I guess the main reason for making our architecture a very granular one is the idea that it maximises flexibility.

Our DBA Team were not overly enthusiastic about this design principle and identified a number of their concerns:

- Time taken to backup controlfile to trace is excessive

- Size of controlfile is very large

- It’s difficult to manage such a large number of files

- We can’t see the tablespace/datafiles easily in Enterprise Manager

- It doesn’t give us anything over an approach that combines all the same-period partitions of the monthly partitioned tables into one tablespace for that period

- Using NOLOGGING means the data is out of sync – need to use a full database recovery

In response to these concerns we argued that:

- Time taken to backup controlfile was less than 1 minute so still acceptable.

- Yes, it’s more difficult to manage a larger number of files than a smaller number, but even if we were vicious with our file count we’d still have lots of files because there is lots of data (around 4TB). The solution to managing this is an appropriate script-driven infrastructure, which wouldn’t care whether there were 500 or 5000 files.

- You can’t see more than 100 tablespaces easily in Enterprise Manager, but so what? As we said, you need an appropriate infrastructure for dealing with a large file count database, whether the file count is 500 or 5000.

- Combining partitions of data from the same time period in fewer tablespaces (or one) is certainly possible and would reduce the file count, but flexibility is reduced in terms of availability, recovery, post recovery processing and IO control.

- Yes, NOLOGGING results in, effectively, data loss even after a recovery is successfully completed – but that’s where the rerunning of specific, appropriate ETL processing will recover/rebuild the data/indexes back to the required state.
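The trade-off between the two layouts can be put into rough numbers. The sketch below compares one tablespace per partition with one tablespace per period; the figures of 100 tables and 60 months are hypothetical, chosen only because they multiply out to roughly the 6000 files mentioned earlier, and the per-period case assumes (perhaps optimistically) that a single datafile is enough for each period’s tablespace.

```python
def layout_summary(tables, months, per_partition):
    """Summarise file count and outage scope for a storage layout.

    per_partition=True  -> one tablespace per (table, month) partition
    per_partition=False -> one tablespace per month, shared by all tables
    """
    partitions = tables * months
    tablespaces = partitions if per_partition else months
    return {
        "tablespaces": tablespaces,
        "datafiles": tablespaces,  # assumes exactly one datafile per tablespace
        # Partitions unavailable while a single lost datafile is recovered.
        "partitions_offline_per_lost_file": partitions // tablespaces,
    }


if __name__ == "__main__":
    granular = layout_summary(tables=100, months=60, per_partition=True)
    combined = layout_summary(tables=100, months=60, per_partition=False)
    print("per partition:", granular)
    print("per period:   ", combined)
```

Under these assumptions the combined layout cuts the file count by a factor of 100, but a single lost datafile takes 100 partitions offline instead of one, and the post recovery ETL has to rebuild all of them.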

It was certainly an interesting debate and as a team we agreed that there were specific things to be investigated in terms of the recovery flexibility and the level of future partitions to be built…we’ll be revisiting it in a fortnight.

Right, I’m off to bed now – gotta feed my baby son Jude…good night one and all!